Preface

Every JPA application that requires some kind of dynamic queries for e.g. filtering has to decide between duplicating parts of queries or building queries conditionally. JPA offers the Criteria API for constructing such dynamic queries, but using this API often results in unreadable and hard to maintain code. Concatenating query string parts is often an alternative that might even work for simple cases, but quickly falls apart in many real world scenarios. Implementing pagination with JPA and especially when fetching collections is hard to do efficiently and often sub-optimal ways are chosen for keeping maintainability.

Blaze Persistence is a library that lives on top of a JPA provider and tries to solve these and many more problems a developer faces when having complex requirements. It is composed of multiple modules that all depend on the core module which this documentation deals with.

The core module tries to ease the pain of writing dynamic queries by offering a fluent builder API that puts readability first. In addition to that, it also integrates deeply with the JPA provider to provide advanced SQL features that not even the JPA providers offer. The deep integration makes it possible to even workaround some known JPA provider bugs.

The entity view module builds on top of the core module and provides a way to define DTOs with mappings to the entity model. The mapping information is used in the query builder to generate projections that perfectly fit the DTO structure along with possible required joins.

The jpa-criteria module is an implementation of the JPA Criteria API based on the query builder of the core module. It offers extensions to the JPA Criteria API that enable the use of some of the concepts and advanced features that are also offered by the core module. The main intent of this module is to ease the migration of existing queries or to allow the use of advanced features in existing queries on a case by case basis.

Relation to JPA and implementations

You can view the Blaze Persistence core module as being a builder for query objects similar to the JPA Criteria API. The builder generally tries to check correctness as early as possible, but defers some checks to query generation time which allows to write query building code that looks almost like JPQL.

Behind the scenes Blaze Persistence core generates a JPQL query or a provider native query string. When advanced features like e.g. CTEs are used, the query string represents the logical query structure and looks very much like a possible future revision of JPQL.

The developers of Blaze Persistence see entity views as a better alternative to JPA 2.1 entity graphs which is why there is no special support for entity graphs. Nevertheless, using entity graph with queries produced by Blaze Persistence shouldn’t be a problem as long as no advanced features are used and can be applied as usual via query hints. Also note that entity graphs require a JPA 2.1 implementation whereas entity views also work with a provider that only implements JPA 2.0.

System requirements

Blaze Persistence core requires at least Java 1.7 and at least a JPA 2.0 implementation.

1. Getting started

This is a step-by-step introduction about how to get started with the core module of Blaze Persistence.

1.1. Setup

Every release comes with a distribution bundle named like blaze-persistence-dist-VERSION. This distribution contains the required artifacts for the Blaze Persistence core module as well as artifacts for integrations and other modules.

-

required - The core module artifacts and dependencies

-

entity-view - The entity view module artifacts and dependencies

-

jpa-criteria - The jpa-criteria module artifacts and dependencies

-

integration/hibernate - The integrations for various Hibernate versions

-

integration/datanucleus - The integration for DataNucleus

-

integration/eclipselink - The integration for EclipseLink

-

integration/openjpa - The integration for OpenJPA

-

integration/entity-view - Integrations for the entity view module

-

integration/querydsl - Integrations for using Blaze-Persistence through a QueryDSL like API

-

integration/quarkus - Integration for using Blaze-Persistence with Quarkus

The required artifacts are always necessary. Every other module builds up on that. Based on the JPA provider that is used, one of the integrations should be used. Other modules are optional and normally don’t have dependencies on each other.

For QueryDSL users there is an integration that is described in the QueryDSL integration chapter.

1.1.1. Maven setup

We recommend you introduce a version property for Blaze Persistence which can be used for all artifacts.

<properties>

<blaze-persistence.version>1.5.1</blaze-persistence.version>

</properties>

The required dependencies for the core module are

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-core-api</artifactId>

<version>${blaze-persistence.version}</version>

<scope>compile</scope>

</dependency>

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-core-impl</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Depending on the JPA provider that should be used, one of the following integrations is required

Hibernate 5.4

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-hibernate-5.4</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Hibernate 5.3

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-hibernate-5.3</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Hibernate 5.2

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-hibernate-5.2</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Hibernate 5+

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-hibernate-5</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Hibernate 4.3

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-hibernate-4.3</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Hibernate 4.2

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-hibernate-4.2</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Datanucleus 5.1

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-datanucleus-5.1</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

Datanucleus 4 and 5

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-datanucleus</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

EclipseLink

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-eclipselink</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

OpenJPA

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-openjpa</artifactId>

<version>${blaze-persistence.version}</version>

<scope>runtime</scope>

</dependency>

QueryDSL integration

When you work with QueryDSL you can additionally have first class integration by using the following dependencies.

<dependency>

<groupId>com.blazebit</groupId>

<artifactId>blaze-persistence-integration-querydsl-expressions</artifactId>

<version>${blaze-persistence.version}</version>

<scope>compile</scope>

</dependency>

1.2. Environments

Blaze Persistence is usable in Java EE, Spring as well as in Java SE environments.

1.2.1. Java SE

An instance of CriteriaBuilderFactory can be obtained as follows:

CriteriaBuilderConfiguration config = Criteria.getDefault(); // optionally, perform dynamic configuration CriteriaBuilderFactory cbf = config.createCriteriaBuilderFactory(entityManagerFactory);

The Criteria.getDefault() method uses the java.util.ServiceLoader to locate

the first implementation of CriteriaBuilderConfigurationProvider on the classpath

which it uses to obtain an instance of CriteriaBuilderConfiguration.

The CriteriaBuilderConfiguration instance also allows dynamic configuration of the

factory.

The CriteriaBuilderFactory should only be built once.

|

| Creating the criteria builder factory eagerly at startup is required so that the integration can work properly. Initializing it differently might result in data races because at creation time e.g. custom functions are registered. |

1.2.2. Java EE

The most convenient way to use Blaze Persistence within a Java EE environment is by using a startup EJB and a CDI producer.

@Singleton // From javax.ejb

@Startup // From javax.ejb

public class CriteriaBuilderFactoryProducer {

// inject your entity manager factory

@PersistenceUnit

private EntityManagerFactory entityManagerFactory;

private CriteriaBuilderFactory criteriaBuilderFactory;

@PostConstruct

public void init() {

CriteriaBuilderConfiguration config = Criteria.getDefault();

// do some configuration

this.criteriaBuilderFactory = config.createCriteriaBuilderFactory(entityManagerFactory);

}

@Produces

@ApplicationScoped

public CriteriaBuilderFactory createCriteriaBuilderFactory() {

return criteriaBuilderFactory;

}

}

1.2.3. CDI

If EJBs aren’t available, the CriteriaBuilderFactory can also be configured in a CDI 1.1 specific way by creating a simple producer method like the following example shows.

@ApplicationScoped

public class CriteriaBuilderFactoryProducer {

// inject your entity manager factory

@PersistenceUnit

private EntityManagerFactory entityManagerFactory;

private volatile CriteriaBuilderFactory criteriaBuilderFactory;

public void init(@Observes @Initialized(ApplicationScoped.class) Object init) {

// no-op to force eager initialization

}

@PostConstruct

public void createCriteriaBuilderFactory() {

CriteriaBuilderConfiguration config = Criteria.getDefault();

// do some configuration

this.criteriaBuilderFactory = config.createCriteriaBuilderFactory(entityManagerFactory);

}

@Produces

@ApplicationScoped

public CriteriaBuilderFactory createCriteriaBuilderFactory() {

return criteriaBuilderFactory;

}

}

1.2.4. Spring

Within a Spring application the CriteriaBuilderFactory can be provided for injection like this.

@Configuration

public class BlazePersistenceConfiguration {

@PersistenceUnit

private EntityManagerFactory entityManagerFactory;

@Bean

@Scope(ConfigurableBeanFactory.SCOPE_SINGLETON)

@Lazy(false)

public CriteriaBuilderFactory createCriteriaBuilderFactory() {

CriteriaBuilderConfiguration config = Criteria.getDefault();

// do some configuration

return config.createCriteriaBuilderFactory(entityManagerFactory);

}

}

1.3. Supported Java runtimes

All projects are built for Java 7 except for the ones where dependencies already use Java 8 like e.g. Hibernate 5.2, Spring Data 2.0 etc. So you are going to need at least JDK 8 for building the project.

We also support building the project with JDK 9 and try to keep up with newer versions. Currently, we support building the project with Java 8 - 14. If you want to run your application on a Java 9+ JVM you need to handle the fact that JDK 9+ doesn’t export some APIs like the JAXB, JAF, javax.annotations and JTA anymore. In fact, JDK 11 removed these modules so command line flags that are sometimes advised to add modules to the classpath won’t work.

Since libraries like Hibernate and others require these APIs you need to make them available. The easiest way to get these APIs back on the classpath is to package them along with your application. This will also work when running on Java 8. We suggest you add the following dependencies.

<dependency>

<groupId>javax.xml.bind</groupId>

<artifactId>jaxb-api</artifactId>

<version>2.2.11</version>

</dependency>

<dependency>

<groupId>com.sun.xml.bind</groupId>

<artifactId>jaxb-core</artifactId>

<version>2.2.11</version>

</dependency>

<dependency>

<groupId>com.sun.xml.bind</groupId>

<artifactId>jaxb-impl</artifactId>

<version>2.2.11</version>

</dependency>

<dependency>

<groupId>javax.transaction</groupId>

<artifactId>javax.transaction-api</artifactId>

<version>1.2</version>

<!-- In a managed environment like Java EE, use 'provided'. Otherwise use 'compile' -->

<scope>provided</scope>

</dependency>

<dependency>

<groupId>javax.activation</groupId>

<artifactId>activation</artifactId>

<version>1.1.1</version>

<!-- In a managed environment like Java EE, use 'provided'. Otherwise use 'compile' -->

<scope>provided</scope>

</dependency>

<dependency>

<groupId>javax.annotation</groupId>

<artifactId>javax.annotation-api</artifactId>

<version>1.3.2</version>

<!-- In a managed environment like Java EE, use 'provided'. Otherwise use 'compile' -->

<scope>provided</scope>

</dependency>

Automatic module names for modules.

| Module | Automatic module name |

|---|---|

Core API |

com.blazebit.persistence.core |

Core Impl |

com.blazebit.persistence.core.impl |

Core Parser |

com.blazebit.persistence.core.parser |

JPA Criteria API |

com.blazebit.persistence.criteria |

JPA Criteria Impl |

com.blazebit.persistence.criteria.impl |

JPA Criteria JPA2 Compatibility |

com.blazebit.persistence.criteria.jpa2compatibility |

1.4. Supported environments/libraries

The bare minimum is JPA 2.0. If you want to use the JPA Criteria API module, you will also have to add the JPA 2 compatibility module. Generally, we support the usage in Java EE 6+ or Spring 4+ applications.

The following table outlines the supported library versions for the integrations.

| Module | Automatic module name | Minimum version | Supported versions |

|---|---|---|---|

Hibernate integration |

com.blazebit.persistence.integration.hibernate |

Hibernate 4.2 |

4.2, 4.3, 5.0, 5.1, 5.2, 5.3, 5.4 (not all features are available in older versions) |

EclipseLink integration |

com.blazebit.persistence.integration.eclipselink |

EclipseLink 2.6 |

2.6 (Probably 2.4 and 2.5 work as well, but only tested against 2.6) |

DataNucleus integration |

com.blazebit.persistence.integration.datanucleus |

DataNucleus 4.1 |

4.1, 5.0 |

OpenJPA integration |

com.blazebit.persistence.integration.openjpa |

N/A |

(Currently not usable. OpenJPA doesn’t seem to be actively developed anymore and no users asked for support yet) |

1.5. First criteria query

This section is supposed to give you a first feeling of how to use the criteria builder. For more detailed information, please see the subsequent chapters.

In the following we suppose cbf and em to refer to an instance of CriteriaBuilderFactory

and JPA’s EntityManager, respectively.

Take a look at the environments chapter for how to obtain a CriteriaBuilderFactory.

|

Let’s start with the simplest query possible:

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class);

This query simply selects all Cat objects and is equivalent to following JPQL query:

SELECT c FROM Cat c

Once the create() method is called the expression

returns a CriteriaBuilder<T> where T is specified via the second parameter of the

create() method and denotes the result type of the query.

The default behavior of create() is that the result type

is assumed to be the entity class from which to select. So if we would like to only select the cats' age we would have to write:

CriteriaBuilder<Integer> cb = cbf.create(em, Integer.class)

.from(Cat.class)

.select("cat.age");

Here we can see that the criteria builder assigns a default alias (the simple lower-case name of the entity class)

to the entity class from which we select (root entity) if we do not specify one. If we want to save some

writing, both the create() and

the from() method allow the specification of a custom alias for the root entity:

CriteriaBuilder<Integer> cb = cbf.create(em, Integer.class)

.from(Cat.class, "c")

.select("c.age");

Next we want to build a more complicated query. Let’s select all cats with an age between 5 and 10 years and with at least two kittens. Additionally, we would like to order the results by name ascending and by id in case of equal names.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "c")

.where("c.age").betweenExpression("5").andExpression("10")

.where("SIZE(c.kittens)").geExpression("2")

.orderByAsc("c.name")

.orderByAsc("c.id");

We have built a couple of queries so far but how can we retrieve the results? There are two possible ways:

-

List<Cat> cats = cb.getResultList();to retrieve all results -

PagedList<Cat> cats = cb.page(0, 10).getResultList();to retrieve 10 results starting from the first result (you must specify at least one unique column to determine the order of results)The

PagedList<Cat>features thegetTotalSize()method which is perfectly suited for displaying the results in a paginated table. Moreover thegetKeysetPage()method can be used to switch to keyset pagination for further paging.

2. Architecture

This is just a high level view for those that are interested about how Blaze Persistence works.

2.1. Interfaces

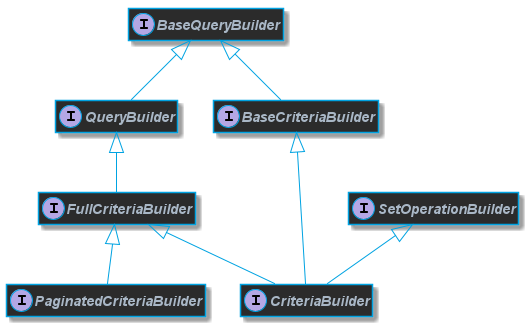

A quick overview that presents the interfaces that are essential for users and how they are related.

2.1.1. Basic functionality

Blaze Persistence has a builder API for building JPQL queries in a comfortable fashion.

The most important interfaces that a user should be concerned with are

The functionalities of the query builders are separated into base interfaces to avoid duplication where possible.

All functionality for the WHERE-clause for example can be found in com.blazebit.persistence.BaseWhereBuilder.

Analogous to that, there also exist interfaces for other clauses.

Unless some advanced features(e.g. CTEs) are used, the query string returned by every query builder is JPQL compliant and thus can also be directly compiled via EntityManager#createQuery(String).

In case of advanced features the query string that is returned might contain syntax elements which are not supported by JPQL. Some features like CTEs simply can not be modeled with JPQL,

therefore a syntax similar to SQL was used to visualize the query model. The query objects returned for such queries are custom implementations,

so beware that you can’t simply cast them to provider specific subtypes.

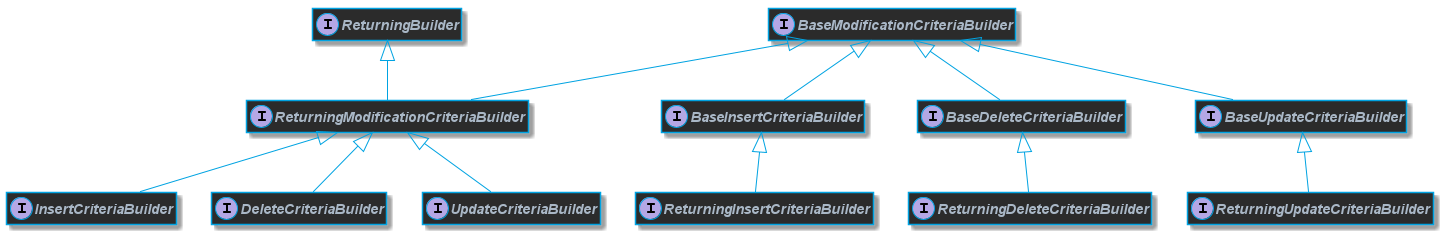

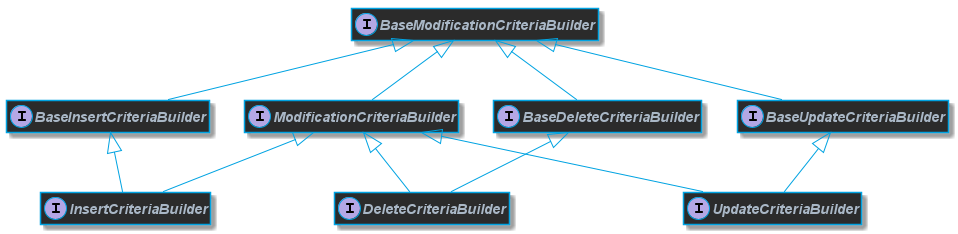

2.1.2. DML support

If a user uses Blaze Persistence for data manipulation too, then the following interfaces are unavoidable to know

Every interface has a dual partner interface prefixed with Returning that is relevant for data manipulation queries that return results.

The Returning interfaces are only relevant when using CTEs (Common Table Expressions)

|

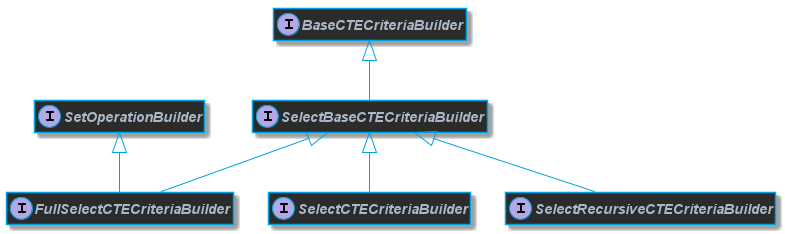

2.1.3. CTE support

CTE builders are split into two families of interface groups. One group is concerned with CTEs that do select queries, the other with DML queries.

Select CTE queries can either be recursive or non-recursive. Recursive CTEs always have a base part and a recursive part which is explicitly modeled in the API.

One starts with a com.blazebit.persistence.SelectRecursiveCTECriteriaBuilder for defining the base part

and then unions the recursive part of the query in a com.blazebit.persistence.SelectCTECriteriaBuilder.

The non-recursive builder is very similar but does not have an explicit notion of a base or recursive part. Although it supports set operations,

we do not recommend building recursive queries with the non-recursive builder especially because it’s not portable and less readable.

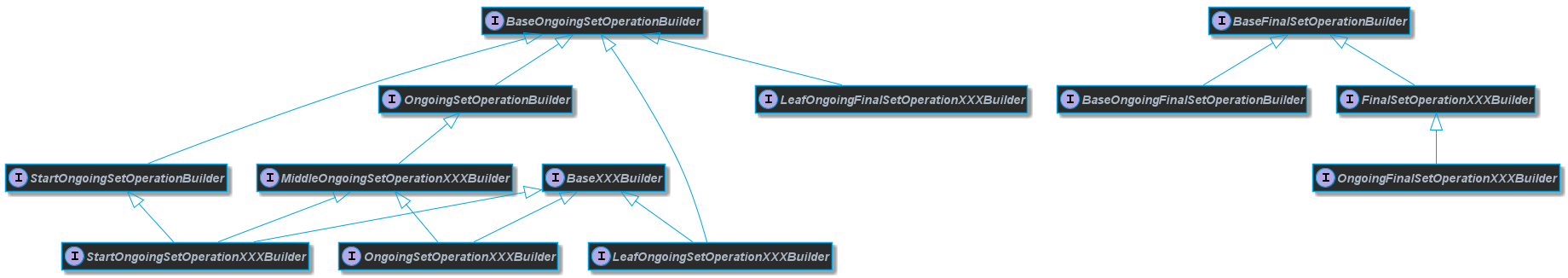

2.1.4. Set operations support

Every query builder has support for set operations as defined by the interface com.blazebit.persistence.SetOperationBuilder.

One can start a nested group of query builders concatenated with set operations. This group has to be ended and concatenated with another query build or another nested group.

When an empty set operation group is encountered during the query building, it is removed internally.

criteriaBuilder

.startSet(Cat.class) (1)

.startUnionAll() (2)

.endSetWith() (3)

.endSet() (4)

.unionAll() (5)

.endSet() (6)

.unionAll() (7)

.endSet() (8)

| 1 | Starting a builder with a nested set operation group returns StartOngoingSetOperationXXXBuilder |

| 2 | Starting any nested set operation group returns StartOngoingSetOperationXXXBuilder |

| 3 | Ending nested set operation group with endSetWith() to specify ordering and limiting returns OngoingFinalSetOperationXXXBuilder |

| 4 | Ending a nested set operation group with endSet() results in MiddleOngoingSetOperationXXXBuilder |

| 5 | Connecting a nested set operation group with a set operation results in OngoingSetOperationXXXBuilder |

| 6 | Ending a top level set operation nested group results in LeafOngoingFinalSetOperationXXXBuilder |

| 7 | Connecting a top level set operation group with a set operation results in LeafOngoingSetOperationXXXBuilder |

| 8 | Ending the top level set operation results in FinalSetOperationXXXBuilder |

Top-level query builder set operations

Invoking a set operation on a top level query builder results in a LeafOngoingSetOperationXXXBuilder type.

LeafOngoingSetOperationXXXBuilder types are the possible exit types for a top level set operation group.

Further connecting the builder via a set operation will produce a builder of the same type LeafOngoingSetOperationXXXBuilder.

criteriaBuilder.from(Cat.class)

.unionAll() (1)

| 1 | The set operation on a top level query builder produces LeafOngoingSetOperationXXXBuilder |

When ending such a builder via endSet(), a FinalSetOperationXXXBuilder is produced.

criteriaBuilder.from(Cat.class)

.unionAll()

.endSet() (1)

| 1 | The ending of a top level set operation builder produces FinalSetOperationXXXBuilder |

FinalSetOperationXXXBuilder types are the result of a top level set operation and once constructed only support specifying ordering or limiting.

Nested query builder set operations

Invoking a nested set operation on a query builder results in a StartOngoingSetOperationXXXBuilder type.

StartOngoingSetOperationXXXBuilder types represent a builder for a group of set operations within parenthesis.

With such a builder the normal query builder methods are available and additionally, it can end the group.

criteriaBuilder.from(Cat.class)

.startUnionAll() (1)

| 1 | The nested set operation on a query builder produces StartOngoingSetOperationXXXBuilder |

When connecting the builder with another set operation a OngoingSetOperationXXXBuilder is produced which essentially has the same functionality.

criteriaBuilder.from(Cat.class)

.startUnionAll()

.unionAll() (1)

| 1 | A set operation on a StartOngoingSetOperationXXXBuilder produces OngoingSetOperationXXXBuilder |

When ending such a top level nested builder via endSet(), a LeafOngoingFinalSetOperationXXXBuilder is produced.

criteriaBuilder.from(Cat.class)

.startUnionAll()

.endSet() (1)

| 1 | Results in LeafOngoingFinalSetOperationXXXBuilder |

Or when in a nested context, a MiddleOngoingSetOperationXXXBuilder is produced.

criteriaBuilder.from(Cat.class)

.startUnionAll()

.startUnionAll()

.endSet() (1)

| 1 | Results in MiddleOngoingSetOperationXXXBuilder |

The ending of the builder is equivalent to doing a closing parenthesis.

Since a nested group only makes sense when connecting the group with something else, the LeafOngoingFinalSetOperationXXXBuilder and MiddleOngoingSetOperationXXXBuilder only allow connecting

a new builder with a set operation or ending the whole query builder.

criteriaBuilder.from(Cat.class)

.startUnionAll()

.endSet()

.unionAll() (1)

| 1 | Results in LeafOngoingSetOperationXXXBuilder |

Or when in a nested context, a OngoingSetOperationXXXBuilder is produced.

criteriaBuilder.from(Cat.class)

.startUnionAll()

.startUnionAll()

.endSet()

.unionAll() (1)

| 1 | Results in OngoingSetOperationXXXBuilder |

Ending a nested group with endSetWith() allows to specify ordering and limiting for the group and returns a OngoingFinalSetOperationXXXBuilder.

criteriaBuilder.from(Cat.class)

.startUnionAll()

.endSetWith() (1)

| 1 | Results in OngoingFinalSetOperationXXXBuilder |

2.2. Query building

Every query builder has several clause specific managers that it delegates to. These managers contain the state for a clause and might interact with other clauses.

Depending on which query builder features are used, the query object that is produced by a query builder through getTypeQuery() or getQuery() is either the JPA provider’s native query or a custom query.

If no advanced features are used, nothing special happens. The query string is built and passed to EntityManager.createQuery() which is then returned.

When advanced features are used, an example query is built which most of the time is very similar to the original query except for advanced features.

This example query serves as a basis for execution of advanced SQL. It almost contains all the necessary parts, there is just some SQL that needs to be replaced.

If CTEs are involved, one query per CTE is built via the same mechanism and added to the participating queries list. This list is ordered and contains all query parts that are involved in an advanced query.

The ordering is important because in the end, parameters are positionally set in SQL and the order within the list represents the order of the query parts in the SQL.

All these query objects are then passed to a QuerySpecification which is capable of producing the SQL for the whole query from it’s query parts.

It serves as component that can be composed into a bigger query but also provides a method for creating a SelectQueryPlan or ModificationQueryPlan.

Such query plans represent the executable form of query specifications that are fixed. The reason for the separation between the two is that list parameters or calls to setFirstResult() and setMaxResults() could change the SQL.

The query specification is wrapped in an implementation of the JPA query interfaces javax.persistence.Query or javax.persistence.TypedQuery and a query plan is only created on demand just before executing.

Parameters, lock modes and flush modes are propagated to all necessary participating queries.

Set operations on top level queries essentially are special query specifications that contain multiple other query specifications.

To really execute such advanced queries, query plans use the ExtendedQuerySupport. It offers methods to run an JPA query with an SQL replacement and a list of participating queries.

The ExtendedQuerySupport is JPA provider specific and is responsible for proper query caching and giving access to SQL specifics of JPA query objects.

The integration of ObjectBuilder is done by introducing a query wrapper that takes results, passes them through the object builder and then returns the results.

2.3. JPA Provider Integration

The essential integration points with the JPA provider are encapsulated in EntityManagerFactoryIntegrator and ExtendedQuerySupport.

The EntityManagerFactoryIntegrator offers support for DBMS detection, function registration and

the construction of a JpaProvider through a JpaProviderFactory. The JpaProvider is a contract that can be used to query JPA provider specifics.

Some of those specifics are whether a feature like entity joins is supported but also metamodel specifics like whether an attribute has a join table.

The ExtendedQuerySupport is necessary for advanced SQL related functionality and might not be available for a JPA provider.

It provides access to SQL related information like the column names of an entity attribute or simply the SQL query for a JPA query.

3. From clause

The FROM clause contains the entities which should be queried.

Normally a query will have one root entity which is why Blaze Persistence offers a convenient factory for creating queries that select the root entity.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class);

The type Cat has multiple purposes in this case.

-

It defines the result type of the query

-

Creates an implicit query root with that type and the alias cat

-

Implicitly selects cat

This implicit logic will help to avoid some boilerplate code in most of the cases. The JPQL generated for such a simple query is just like you would expect

SELECT cat FROM Cat cat

As soon as a query root is added via from(), the implicitly created query root is removed.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class)

.from(Person.class, "person")

.select("person.kittens");

In such a query, the type Cat only serves the purpose of defining the query result type.

SELECT kittens_1 FROM Person person LEFT JOIN person.kittens kittens_1

Contrary to the described behavior, using the overload of the create method that allows to specify the alias for the query root will result in an explicit query root.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "myCat");

This is essentially a shorthand for

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class)

.from(Cat.class, "myCat");

A query can also have multiple root entities which are connected with the , operator that essentially has the semantics of a CROSS JOIN.

Beware that when having multiple root entities, path expression must use absolute paths.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "myCat")

.select("name");

The expression name in this case is interpreted as relative to the query root, so it is equivalent to myCat.name.

When having multiple query roots, the use of a relative path will lead to an exception saying that relative resolving is not allowed with multiple query roots!

3.1. Joins

JPQL offers support for CROSS, INNER, LEFT and RIGHT JOIN which are all well supported by Blaze Persistence.

In contrast to JPQL, Blaze Persistence also has a notion of implicit/default and explicit joins which makes it very convenient to write queries as can be seen a few sections later.

RIGHT JOIN support is optional in JPA so we recommend not using it at all.

|

| In addition to joins on mapped relations, Blaze Persistence also offers support for unrelated or entity joins offered by all major JPA providers. |

3.1.1. Implicit joins

An implicit or default join is a special join that can be referred to by

-

an absolute path from a root entity to an association

-

alias if an explicit alias has been defined via

joinDefault()means

| A path is considered absolute also if it is relative to the query root |

The following query builder will create an implicit join for the path kittens when inspecting the select clause and reuse that implicit join in the where clause because of the use of an absolute path.

CriteriaBuilder<Integer> cb = cbf.create(em, Integer.class)

.from(Cat.class)

.select("kittens.age")

.where("kittens.age").gt(1);

This will result in the following JPQL query

SELECT kittens_1.age FROM Cat cat LEFT JOIN cat.kittens kittens_1 WHERE kittens_1.age > 1

A relation dereference like alias.relation.property will always result in a JOIN being added for alias.relation.

The exception to that is when the accessed property is the identifier property of the type of relation and that identifier is owned by alias i.e. the column is contained in the owner’s table.

If the property is the identifier and the JpaProvider supports optimized id access,

no join is generated but instead the expression is rendered as it is alias.relation.identifier.

Model awareness

Implicit joins are a result of a path dereference or explicit fetching. A path dereference can happen in any place where an expression is expected.

An explicit fetch can be invoked on FullQueryBuilder instances which is the top type for

CriteriaBuilder and PaginatedCriteriaBuilder.

Every implicit join will result in a so called "model-aware" join. The model-awareness of a join is responsible for determining the join type to use.

Generally it is a good intuition to think of a model-aware join to always produce results, thus never restricting the result set but only extending it.

A model-aware join currently decides between INNER and LEFT JOIN. The INNER JOIN is only used if

-

The parent join is an

INNER JOIN -

The relation is non-optional e.g. the

optionalattribute of a@ManyToOneor@OneToOneis false

| This is different from how JPQL path expressions are normally interpreted but will result in a more natural output. |

If you aren’t happy with the join types you can override them and even specify an alias for implicit joins via the

joinDefault method and variants.

Consider the following example for illustration purposes of the implicit joins.

CriteriaBuilder<Integer> cb = cbf.create(em, Integer.class)

.from(Cat.class)

.select("kittens.age")

.where("kittens.age").gt(1)

.innerJoinDefault("kittens", "kitty");

The builder first creates an implicit join for kittens with the join type LEFT JOIN because a Collection can never be non-optional.

If you just had the SELECT clause, a NULL value would be produced for cats that don’t have kittens.

But in this case the WHERE clause filters out these cats, because any comparison with NULL will result in UNKNOWN and thus FALSE.

Null-aware predicates like IS NULL are obviously an exception to this.

|

The last statement will take the default/implicit join for the path kittens, set the join type to INNER and the alias to kitty.

| Although the generated aliases for implicit joins are deterministic, they might change over time so you should never use them to refer to implicit joins. Always use the full path to the join relation or define an alias and use that instead! |

3.1.2. Explicit joins

Explicit joins are different from implicit/default joins in a sense that they are only accessible through their alias. You can have only one default join which is identified by it’s absolute path, but multiple explicit joins as these are identified by their alias. This means that you can also join a relation multiple times with different aliases.

You can create explicit joins with the join() method and variants.

The following shows explicit and implicit joins used together.

CriteriaBuilder<Integer> cb = cbf.create(em, Integer.class)

.from(Cat.class)

.select("kittens.age")

.where("kitty.age").gt(1)

.innerJoin("kittens", "kitty");

This query will in fact create two joins. One for the explicitly inner joined kittens with the alias kitty and another for the implicitly left joined kittens used in the SELECT clause.

The resulting JPQL looks like the following

SELECT kittens_1.age FROM Cat cat INNER JOIN cat.kittens kitty LEFT JOIN cat.kittens kittens_1 WHERE kitty.age > 1

3.1.3. Fetched joins

Analogous to the FETCH keyword in JPQL, you can specify for every join node of a FullQueryBuilder if it should be fetched.

Every join() method variant comes with a partner method,

that does fetching for the joined path. In addition to that, there is also a simple fetch() method which can be provided with absolute paths to relations.

These relations are then implicit/default join fetched, i.e. a default join node with fetching enabled is created for every relation.

You can make use of deep paths like kittens.kittens which will result in fetch joining two levels of kittens.

|

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class)

.from(Cat.class)

.leftJoinFetch("father", "dad")

.whereOr()

.where("dad").isNull()

.where("dad.age").gt(1)

.endOr()

.fetch("kittens.kittens", "mother");

The father relation is left join fetched and given an alias which is then used in the WHERE clause. Two levels of kittens and the mother relation are join fetched.

SELECT cat FROM Cat cat LEFT JOIN FETCH cat.father dad LEFT JOIN FETCH cat.kittens kittens_1 LEFT JOIN FETCH kittens_1.kittens kittens_2 LEFT JOIN FETCH cat.mother mother_1 WHERE dad IS NULL OR dad.age > 1

| Although the JPA spec does not specifically allow aliasing fetch joins, every major JPA provider supports this. |

When doing a scalar select instead of a query root select, Blaze Persistence automatically adapts the fetches to the new fetch owners.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class)

.from(Cat.class)

.fetch("father.kittens")

.select("father");

In this case we fetch the father relation and the kittens of the father. By also selecting the father relation, the fetch owner changes from the query root to the father.

This has the effect, that the father is not fetch joined, as that would be invalid.

SELECT father_1 FROM Cat cat LEFT JOIN cat.father father_1 LEFT JOIN FETCH father_1.kittens kittens_1

3.1.4. Array joins

Array joins are an extension to the JPQL grammar which offer a convenient way to create joins with an ON clause condition.

An array join expression is a path expression followed by an opening bracket, the index expression and then the closing bracket e.g. arrayBase[indexPredicateOrExpression].

The type of the arrayBase expression must be an association. If it is an indexed List e.g. uses a @OrderColumn or a Map it is possible to use an expression.

In case of an indexed list, the type of the indexPredicateOrExpression must be numeric. For maps, the type must match the map key type as defined in the entity.

CriteriaBuilder<String> cb = cbf.create(em, String.class)

.from(Cat.class)

.select("localizedName[:language]")

.where("localizedName[:language]").isNotNull();

Such a query will result in the following JPQL

SELECT localizedName_language

FROM Cat cat

LEFT JOIN cat.localizedName localizedName_language

ON KEY(localizedName_language) = :language

WHERE localizedName_language IS NOT NULL

The relation localizedName is assumed to be a map of type Map<String, String> which maps a language code to a localized name.

The more general approach is to use a predicate expression that allows to filter an association.

CriteriaBuilder<String> cb = cbf.create(em, String.class)

.from(Cat.class)

.select("kittens[age > 18].id")

.select("kittens[age > 18].name");

Such a query will result in the following JPQL

SELECT kittens_age___18.id, kittens_age___18.name

FROM Cat cat

LEFT JOIN cat.kittens kittens_age___18

ON kittens_age___18.age > 18

Note that it is also possible to use an entity name as base expression for an array expression, which is called an entity array expression.

CriteriaBuilder<String> cb = cbf.create(em, String.class)

.from(Cat.class)

.select("Cat[age > 18].id")

.select("Cat[age > 18].name");

Such a query will result in the following JPQL

SELECT kittens_age___18.id, kittens_age___18.name

FROM Cat cat

LEFT JOIN Cat Cat___age___18

ON kittens_age___18.age > 18

| In case of array expressions, the generated implicit/default join node is identified not only by the absolute path, but also by the index expression. |

3.1.5. Correlated joins

JPQL allows subqueries to refer to a relation based on a join alias of the outer query within the from clause, also known as correlated join. A correlated join in Blaze Persistence can be done when initiating a subquery or be added as cross join to an existing subquery builder.

CriteriaBuilder<Long> cb = cbf.create(em, Long.class)

.from(Cat.class, "c")

.selectSubquery()

.from("c.kittens", "kitty")

.select("COUNT(kitty.id)")

.end();

Such a query will result in the following JPQL

SELECT

(

SELECT COUNT(kitty.id)

FROM c.kittens kitty

)

FROM Cat c

Although JPA does not mandate the support for subqueries in the SELECT clause, every major JPA provider supports it.

|

You can even use the OUTER function or macros within the correlation join path!

CriteriaBuilder<Long> cb = cbf.create(em, Long.class)

.from(Cat.class, "c")

.selectSubquery()

.from("OUTER(kittens)", "kitty")

.select("COUNT(kitty.id)")

.end();

This will result in the same JPQL as before as OUTER will refer to the query root of the outer query.

SELECT

(

SELECT COUNT(kitty.id)

FROM c.kittens kitty

)

FROM Cat c

3.1.6. Entity joins

An entity join is a type of join for unrelated entities, in the sense that no JPA mapping is required to join the entities. Entity joins are quite useful, especially when information from separate models(i.e. models that have no static dependency on each other) should be queried.

| Entity joins are only supported in newer versions of JPA providers(Hibernate 5.1+, EclipseLink 2.4+, DataNucleus 5+) |

Imagine a query that reports the count of people that are older than a cat for each cat

CriteriaBuilder<Long> cb = cbf.create(em, Long.class)

.from(Cat.class, "c")

.leftJoinOn(Person.class, "p")

.on("c.age").ltExpression("p.age")

.end()

.select("c.name")

.select("COUNT(p.id)")

.groupBy("c.id", "c.name");

The JPQL representation looks just as expected

SELECT c.name, COUNT(p.id)

FROM Cat c

LEFT JOIN Person p

ON c.age < p.age

GROUP BY c.id, c.name

Entity joins normally require a base alias but default to the query root when only a single query root is available.

INNER entity joins don’t need support from the JPA provider because these are rewritten to a JPQL compliant CROSS JOIN if necessary.

|

3.2. On clause

The ON clause is a filter predicate similar to the WHERE clause, but is evaluated while joining to restrict the joined elements.

In case of INNER joins the ON clause has the same effect as when putting the predicate into the WHERE clause.

However LEFT joins won’t filter out objects from the source even if the predicate doesn’t match any joinable object, but instead will produce a NULL value for the joined element.

The ON clause is used when using array joins to restrict the key of a join to the index expression.

Since the ON clause is only supported as of JPA 2.1, the usage with JPA 2.0 providers that have no equivalent vendor extension will fail.

|

The ON clause can be constructed by setting a JPQL predicate expression with setOnExpression() or by using the predicate builder>, Predicate Builder API.

| setOnExpression() | Predicate Builder API |

|---|---|

CriteriaBuilder<String> cb =

cbf.create(em, String.class)

.from(Cat.class)

.select("l10nName")

.leftJoinOn("localizedName", "l10nName")

.setOnExpression("KEY(l10nName) = :lang")

.where("l10nName").isNotNull();

|

CriteriaBuilder<String> cb =

cbf.create(em, String.class)

.from(Cat.class)

.select("l10nName")

.leftJoinOn("localizedName", "l10nName")

.on("KEY(l10nName)").eq(":lang")

.end()

.where("l10nName").isNotNull();

|

The resulting JPQL looks as expected

SELECT localizedNameForLanguage

FROM Cat cat

LEFT JOIN cat.localizedName l10nName

ON KEY(l10nName) = :lang

WHERE l10nName IS NOT NULL

3.3. VALUES clause

The VALUES clause is similar to the SQL VALUES clause in the sense that it allows to define a temporary set of objects for querying.

There are 3 different types of values for which a VALUES clause can be created

-

Basic values (Integer, String, etc.)

-

Managed values (Entities, Embeddables, CTEs)

-

Identifiable values (Entities, CTEs)

For query caching reasons, a VALUES clause has a fixed number of elements. If you bind a collection that has a smaller size, behind the scenes the rest is filled up with NULL values which are filtered out by a WHERE clause automatically.

Trying to bind a collection with a larger size will lead to an exception at bind time.

The VALUES clause is a feature that can be used for doing efficient batching. The number of elements can serve as batch size. Processing a collection iteratively and binding subsets to a query efficiently reuses query caches.

For one-shot or rarely executed queries it might not be necessary to implement batching.

In such cases use one of the overloads that use the collection size as number of elements.

The join alias that must be defined for a VALUES clause is reused as alias for the parameter to bind values.

CriteriaBuilder<String> cb = cbf.create(em, String.class)

.fromValues(String.class, "myValue", 10)

.select("myValue")

.setParameter("myValue", valueCollection);

For some cases it might be better to make use of entity functions instead of a VALUES

|

3.3.1. Basic values

The following basic value types are supported

-

Boolean -

Byte -

Short -

Integer -

Long -

Float -

Double -

Character -

String -

BigInteger -

BigDecimal -

java.sql.Time -

java.sql.Date -

java.sql.Timestamp -

java.util.Date -

java.util.Calendar

via the fromValues(Class elementType, String alias, int size) method.

Collection<String> valueCollection = Arrays.asList("value1", "value2");

CriteriaBuilder<String> cb = cbf.create(em, String.class)

.fromValues(String.class, "myValue", valueCollection)

.select("myValue");

The resulting logical JPQL doesn’t include individual parameters, but specifies the count of the values. The alias of the values clause from item also represents the parameter name.

SELECT myValue FROM String(2 VALUES) myValue

Behind the scenes, a type called ValuesEntity is used to be able to implement the VALUES clause.

For further information on TREAT functions, take a look at the JPQL functions chapter.

3.3.2. Non-Standard basic values

To support non-standard basic types the fromValues(Class entityType, String attribute, String alias, int size) method has to be used which will determine the proper SQL type based on the SQL type of the specified entity attribute.

Collection<String> valueCollection = Arrays.asList("value1", "value2");

CriteriaBuilder<String> cb = cbf.create(em, String.class)

.fromValues(Cat.class, "name", "myValue", valueCollection)

.select("myValue");

The logical JPQL encodes this as

SELECT myValue FROM String(2 VALUES LIKE Cat.name) myValue

3.3.3. Managed values

Managed values are objects of a JPA managed type i.e. entities or embeddables. A VALUES clause for such types will include all properties of that type,

so be careful when using this variant. For using only the id part of a managed type, take a look at the identifiable values variant.

If using all properties of an entity or embeddable is not appropriate for you, you should consider creating a custom CTE entity that covers only the subset of properties you are interested in

and finally convert your entity or embeddable object to that new type so it can be used with the VALUES clause.

Let’s look at an example

@Embeddable

class MyEmbeddable {

private String property1;

private String property2;

}

The embeddable defines 2 properties and a VALUES query for objects of that type might look like this

Collection<MyEmbeddable> valueCollection = ...

CriteriaBuilder<MyEmbeddable> cb = cbf.create(em, MyEmbeddable.class)

.fromValues(MyEmbeddable.class, "myValue", valueCollection)

.select("myValue");

The JPQL for such a query looks roughly like the following

SELECT myValue FROM MyEmbeddable(1 VALUES) myValue

3.3.4. Identifiable values

Identifiable values are also objects of a JPA managed type, but restricted to identifiable managed types i.e. no embeddables.

Every entity and CTE entity is an identifiable managed type and can thus be used in

fromIdentifiableValues().

The main difference to the managed values variant is that only the identifier properties of the objects are bound instead of all properties.

Let’s look at an example

Collection<Cat> valueCollection = ...

CriteriaBuilder<Long> cb = cbf.create(em, Long.class)

.fromIdentifiableValues(Cat.class, "cat", valueCollection)

.select("cat.id");

The JPQL for such a query looks roughly like the following

SELECT cat.id FROM Cat.id(1 VALUES) cat

The values parameter "cat" will still expect instances of the type Cat, but will only bind the id attribute values.

This also works for embedded ids and access to the embedded values works just like expected, by dereferencing the embeddable further i.e. alias.embeddable.property

| When using the identifiable values, only the id values are available for the query. Using any other property will lead to an exception. |

3.4. Before and after DML in CTEs

When using DML in CTEs it depends on the DBMS what state a FROM element might give.

Normally this is not problematic as it is rarely necessary to do DML and a SELECT for the same entity in one query.

When it is necessary to do that, it is strongly advised to make use of fromOld()

or fromNew() to use the state before or after side-effects happen.

For example usage and further information, take a look into the Updatable CTEs chapter

3.5. Subquery in FROM clause

In SQL, a from clause item must be a relation which is usually a table name but can also be a subquery, yet most ORMs do not support that directly.

Blaze Persistence implements support for subqueries in the FROM clause by requiring the return type of a subquery to be an entity or CTE type.

This is similar to how inlined CTEs work and in fact, under the hood, CTE builders are used to make this feature work. For more information about CTEs, go to the CTE documentation section.

Before a subquery can be constructed, one has to think of an entity or CTE type that represents the result of the subquery. Consider the following CTE entity as an example.

@CTE

@Entity

class ResultCte {

@Id

private Long id;

private String name;

}

This CTE entity can then be used as result type for a subquery in fromSubquery().

CriteriaBuilder<Long> cb = cbf.create(em, Long.class)

.fromSubquery(ResultCte.class, "r")

.from(Cat.class, "subCat")

.bind("id").select("id")

.bind("id").select("name")

.orderByDesc("age")

.orderByDesc("id")

.setMaxResults(5)

.end()

.select("r.id");

The example doesn’t really make sense, it just tries to show off the possibilities. The JPQL for such a query looks roughly like the following

SELECT r.id

FROM ResultCte(

SELECT subCat.id, subCat.name

FROM Cat subCat

ORDER BY subCat.age DESC, subCat.id DESC

LIMIT 5

) r(id, name)

Using a dedicated entity or CTE class for a subquery result and binding every attribute might make sense for some cases,

but most of the time, it is sufficient to re-bind all entity attributes again i.e. the result type matches the query root type Cat.

To help with writing such queries, the fromEntitySubquery() method can be used.

Databases are pretty good at eliminating unnecessary/unused projections in such scenarios, so it’s no big deal to use this short-cut if applicable.

CriteriaBuilder<Long> cb = cbf.create(em, Long.class)

.fromEntitySubquery(Cat.class, "r")

.orderByDesc("age")

.orderByDesc("id")

.setMaxResults(5)

.end()

.select("r.id");

will result in something like the following:

SELECT r.id

FROM Cat(

SELECT r_1.age, r_1.father.id, r_1.id, r_1.mother.id, r_1.name

FROM Cat r_1

ORDER BY r_1.age DESC, r_1.id DESC

LIMIT 5

) r(age, father.id, id, mother.id, name)

As can be seen, all owned entity attributes of the type Cat are bound again.

Apart from the fromXXX methods there is also support for joining such subqueries via

the joinOnSubquery()

and joinOnEntitySubquery()

methods or the join type specific variants.

3.6. Lateral subquery join

Blaze Persistence offers support for doing lateral joins via the methods

joinLateralOnSubquery()

and joinLateralOnEntitySubquery().

A lateral join, which might also be known as cross apply or outer apply, allows to refer to aliases on the left side of the subquery i.e. the alias of the join base.

Such a join is like a correlated subquery for the FROM clause.

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class, "c")

.leftJoinLateralOnEntitySubquery("c.kittens", "topKitten", "kitten")

.orderByDesc("age")

.orderByDesc("id")

.setMaxResults(5)

.end()

.on("1").eqExpression("1")

.end()

.select("c.name")

.select("COUNT(topKitten.id)");

The example query shows a special feature of lateral joins, which is the possibility to correlate a collection for a lateral join.

This could also have been written by correlating an entity type and defining the correlation for the collection in the WHERE clause manually.

The resulting JPQL might look like the following:

SELECT c.name, COUNT(topKitten.id)

FROM Cat c

LEFT JOIN LATERAL Cat(

SELECT kitten.age, kitten.father.id, kitten.id, kitten.mother.id, kitten.name

FROM Cat kitten

ORDER BY kitten.age DESC, kitten.id DESC

LIMIT 5

) topKitten(age, father.id, id, mother.id, name) ON 1=1

GROUP BY c.name

Note that lateral joins only work for inner and left joins. Also, not all databases support lateral joins. H2 and HSQL do not support that feature. MySQL only supports this since version 8. Oracle supports this since version 12.

4. Predicate Builder

The Predicate Builder API tries to simplify construction but also the reuse of predicates. There are multiple clauses and expressions that support entering the API:

Every predicate builder follows the same scheme:

-

An entry method can be used to start the builder with the left hand side of a predicate

-

Entry methods are additive, and finishing a predicate results in adding that to the compound predicate

-

Once a predicate has been started, it must be properly finished

-

On the top level, a method to directly set a JPQL predicate expression is provided

| Subqueries are not supported to be directly embedded into expressions but instead have to be built with the builder API. |

There are multiple different entry methods to cover all possible usage scenarios. The entry methods are mostly named after the clause in which they are defined

e.g. in the WHERE clause the entry methods are named where(), whereExists() etc.

The following list of possible entry methods refers to WHERE clause entry methods for easier readability.

where(String expression)-

Starts a builder for a predicate with the given expression on the left hand side.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("name").eq("Felix");

SELECT cat FROM Cat cat WHERE cat.name = :param_1

whereExists()&whereNotExists()-

Starts a subquery builder for an exists predicate.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereExists()

.from(Cat.class, "subCat")

.select("1")

.where("subCat").notEqExpression("cat")

.where("subCat.name").eqExpression("cat.name")

.end();

SELECT cat FROM Cat cat WHERE EXISTS (SELECT 1 FROM Cat subCat WHERE subCat <> cat AND subCat.name = cat.name)

whereCase()-

Starts a general case when builder for a predicate with the resulting case when expression on the left hand side.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereCase()

.when("cat.name").isNull()

.then(1)

.when("LENGTH(cat.name)").gt(10)

.then(2)

.otherwise(3)

.eqExpression(":someValue");

SELECT cat FROM Cat cat

WHERE CASE

WHEN cat.name IS NULL THEN :param_1

WHEN LENGTH(cat.name) > 10 THEN :param_2

ELSE :param_3

END = :someValue

whereSimpleCase(String expression)-

Starts a general case when builder for a predicate with the resulting case when expression on the left hand side.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereSimpleCase("SUBSTRING(cat.name, 1, 2)")

.when("'Dr.'", "'Doctor'")

.when("'Mr'", "'Mister'")

.otherwise("'Unknown'")

.notEqExpression("cat.fullTitle");

SELECT cat FROM Cat cat

WHERE CASE SUBSTRING(cat.name, 1, 2)

WHEN 'Dr.' THEN 'Doctor'

WHEN 'Mr.' THEN 'Mister'

ELSE 'Unknown'

END <> cat.fullTitle

whereSubquery()-

Starts a subquery builder for a predicate with the resulting subquery expression on the left hand side.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereSubquery()

.from(Cat.class, "subCat")

.select("subCat.name")

.where("subCat.id").eq(123)

.end()

.eqExpression("cat.name");

SELECT cat FROM Cat cat WHERE (SELECT subCat.name FROM Cat subCat WHERE subCat.id = :param_1) = cat.name

whereSubquery(String subqueryAlias, String expression)-

Like

whereSubquery()but instead theexpressionis used on the left hand side. Occurrences of subqueryAlias in the expression will be replaced by the subquery expression.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereSubquery("subQuery1", "subQuery1 + 10")

.from(Cat.class, "subCat")

.select("subCat.age")

.where("subCat.id").eq(123)

.end()

.gt(10);

SELECT cat FROM Cat cat WHERE (SELECT subCat.age FROM Cat subCat WHERE subCat.id = :param_1) + 10 > 10

whereSubqueries(String expression)-

Starts a subquery builder capable of handling multiple subqueries and uses the given

expressionon the left hand side of the predicate. Subqueries are started withwith(String subqueryAlias)and aliases occurring in the expression will be replaced by the respective subquery expressions.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereSubqueries("subQuery1 + subQuery2")

.with("subQuery1")

.from(Cat.class, "subCat")

.select("subCat.age")

.where("subCat.id").eq(123)

.end()

.with("subQuery2")

.from(Cat.class, "subCat")

.select("subCat.age")

.where("subCat.id").eq(456)

.end()

.end()

.gt(10);

SELECT cat FROM Cat cat

WHERE (SELECT subCat.age FROM Cat subCat WHERE subCat.id = :param_1)

+ (SELECT subCat.age FROM Cat subCat WHERE subCat.id = :param_2) > 10

whereOr()&whereAnd()-

Starts a builder for a nested compound predicate. Elements of that predicate are connected with

ORorANDrespectively.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.whereOr()

.where("cat.name").isNull()

.whereAnd()

.where("LENGTH(cat.name)").gt(10)

.where("cat.name").like().value("F%").noEscape()

.endAnd()

.endOr();

SELECT cat FROM Cat cat WHERE cat.name IS NULL OR LENGTH(cat.name) > :param_1 AND cat.name LIKE :param_2

setWhereExpression(String expression)-

Sets the

WHEREclause to the given JPQL predicate expression overwriting existing predicates.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.setWhereExpression("cat.name IS NULL OR LENGTH(cat.name) > 10 AND cat.name LIKE 'F%'");

SELECT cat FROM Cat cat WHERE cat.name IS NULL OR LENGTH(cat.name) > 10 AND cat.name LIKE 'F%'

setWhereExpressionSubqueries(String expression)-

A combination of

setWhereExpressionandwhereSubqueries. Sets theWHEREclause to the given JPQL predicate expression overwriting existing predicates. Subqueries replace aliases in the expression.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.setWhereExpressionSubqueries("cat.name IS NULL AND subQuery1 + subQuery2 > 10")

.with("subQuery1")

.from(Cat.class, "subCat")

.select("subCat.age")

.where("subCat.id").eq(123)

.end()

.with("subQuery2")

.from(Cat.class, "subCat")

.select("subCat.age")

.where("subCat.id").eq(456)

.end()

.end();

SELECT cat FROM Cat cat

WHERE cat.name IS NULL

AND (SELECT subCat.age FROM Cat subCat WHERE subCat.id = :param_1)

+ (SELECT subCat.age FROM Cat subCat WHERE subCat.id = :param_2) > 10

4.1. Restriction Builder

The restriction builder is used to build a predicate for an existing left hand side expression and chains to the right hand side expression. It supports all standard predicates from JPQL and expressions can be of the following types:

- Value/Parameter

-

The actual value will be registered as parameter value and a named parameter expression will be added instead. Methods that accept values typical accept arguments of type

Object. - Expression

-

A JPQL scalar expression can be anything. A path expression, literal, parameter expression, etc.

- Subquery

-

A subquery is always created via a subquery builder. Variants for replacing aliases in expressions with subqueries also exist.

Available predicates

BETWEEN&NOT BETWEEN-

The

betweenmethods expect the start value and chain to the between builder which is terminated with the end value.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.age").between(1).and(10)

.where("cat.age").notBetween(5).and(6);

SELECT cat FROM Cat cat WHERE cat.age BETWEEN :param_1 AND :param_2 AND cat.age NOT BETWEEN :param_3 AND :param_4

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.age").notEq(10)

.where("cat.age").ge().all()

.from(Cat.class, "subCat")

.select("subCat.age")

.end();

SELECT cat FROM Cat cat

WHERE cat.age <> :param_1

AND cat.age >= ALL(

SELECT subCat.age

FROM Cat subCat

)

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.age").in(1, 2, 3, 4)

.where("cat.age").notIn()

.from(Cat.class, "subCat")

.select("subCat.age")

.where("subCat.name").notEqExpression("cat.name")

.end();

SELECT cat FROM Cat cat

WHERE cat.age IN (:param_1, :param_2, :param_3, :param_4)

AND cat.age NOT IN(

SELECT subCat.age

FROM Cat subCat

WHERE subCat.name <> cat.name

)

IS NULL&IS NOT NULL-

A simple null check.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.age").isNotNull();

SELECT cat FROM Cat cat WHERE cat.age IS NOT NULL

IS EMPTY&IS NOT EMPTY-

Checks if the left hand side is empty. Only valid for path expressions that evaluate to collections.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.kittens").isNotEmpty();

SELECT cat FROM Cat cat WHERE cat.kittens IS NOT EMPTY

MEMBER OF&NOT MEMBER OF-

Checks if the left hand side is a member of the collection typed path expression.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.father").isNotMemberOf("cat.kittens");

SELECT cat FROM Cat cat WHERE cat.father NOT MEMBER OF cat.kittens

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.name").like().value("Bill%").noEscape()

.where("cat.name").notLike(false).expression("'%abc%'").noEscape();

SELECT cat FROM Cat cat

WHERE cat.name LIKE :param_1

AND UPPER(cat.name) NOT LIKE UPPER('%abc%')

4.2. Case When Expression Builder

The binary predicates EQ, NOT EQ, LT, LE, GT & GE also allow to create case when expressions for the right hand side via a builder API.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.where("cat.name").eq()

.caseWhen("cat.father").isNotNull()

.thenExpression("cat.father.name")

.caseWhen("cat.mother").isNotNull()

.thenExpression("cat.mother.name")

.otherwise("Billy");

SELECT cat

FROM Cat cat

LEFT JOIN cat.father father_1

LEFT JOIN cat.mother mother_1

WHERE cat.name = CASE

WHEN father_1 IS NOT NULL

THEN father_1.name

WHEN mother_1 IS NOT NULL

THEN mother_1.name

ELSE

:param_1

END

5. Where clause

The WHERE clause has mostly been described already in the Predicate Builder chapter.

The clause is applicable to all statement types, but implicit joins are only possible in SELECT statements,

therefore it is advised to move relation access to an exists subquery in DML statements like

CriteriaBuilder<Integer> cb = cbf.update(em, Cat.class, "c")

.setExpression("age", "age + 1")

.whereExists()

.from(Cat.class, "subCat")

.where("subCat.id").eqExpression("c.id")

.where("subCat.father.name").like().value("Bill%").noEscape()

.end();

Which will roughly render to the following JPQL

UPDATE Cat c

SET c.age = c.age + 1

WHERE EXISTS(

SELECT 1

FROM Cat subCat

LEFT JOIN subCat.father father_1

WHERE subCat.id = c.id

AND father_1.name LIKE :param_1

)

5.1. Keyset pagination support

Keyset pagination or scrolling/filtering is way to efficiently paginate or scroll through a large data set. The idea of a keyset is, that every tuple can be uniquely identified by that keyset. Pagination only makes sense when the tuples in a data set are ordered and keyset pagination in contrast to offset pagination makes efficient use of the ordering property of the data set. By remembering the highest and lowest keysets of a page, it is possible to query the previous and next pages efficiently.

Apart from the transparent keyset pagination support, it is also possible to implement keyset scrolling/filtering manually.

A keyset consists of the values of the ORDER BY expressions of a tuple and the last expression must uniquely identify a tuple.

The id of an entity is not only a good candidate in general for the last expression, but also currently the only possible expression to satisfy this constraint.

The following query will order cats by their birthday and second by their id.

CriteriaBuilder<Cat> cb = cbf.create(em, Cat.class, "cat")

.orderByAsc("cat.birthday")

.orderByAsc("cat.id")

SELECT cat FROM Cat cat ORDER BY cat.birthday ASC, cat.id ASC

5.1.1. Positional keyset pagination

In order to receive only the first 10 cats you would do

List<Cat> cats = cb.setMaxResults(10)

.getResultList();

In order to receive the next cats after the last seen cat (highest keyset) with positional keyset elements you would do

Cat lastCat = cats.get(cats.size() - 1);

List<Cat> nextCats = cb.afterKeyset(lastCat.getBirthday(), lastCat.getId())

.getResultList();

which roughly translates to the following JPQL

SELECT cat FROM Cat cat

WHERE cat.birthday > :_keysetParameter_0 OR (

cat.birthday = :_keysetParameter_0 AND

cat.id > :_keysetParameter_1

)

ORDER BY cat.birthday ASC NULLS LAST, cat.id ASC NULLS LAST

The positional part roughly means that the keyset element as passed into afterKeyset()

or beforeKeyset() must match the order of the corresponding ORDER BY expressions.

Note that this is in general much more efficient than an OFFSET based paging/scrolling because this approach can scroll to the next and previous page in O(log n),

whereas using OFFSET results in a complexity of O(n), thus making it harder to get to latter pages in big data sets.

This is due to how a keyset paginated query can efficiently traverse an index on the DBMS side. Using OFFSET paging requires actually counting tuples that should be skipped which is less efficient.

Similarly to scrolling to a page that comes after a keyset, it is also possible to scroll to a page that comes before a keyset

Cat firstCat = nextCats.get(0);

List<Cat> previousCats = cb.beforeKeyset(firstCat.getBirthday(), firstCat.getId())

.getResultList();

// cats and previousCats are equal

but this time the JPQL looks differently

SELECT cat FROM Cat cat

WHERE cat.birthday < :_keysetParameter_0 OR (

cat.birthday = :_keysetParameter_0 AND

cat.id < :_keysetParameter_1

)

ORDER BY cat.birthday DESC NULLS FIRST, cat.id DESC NULLS FIRST

This is how keyset pagination works, but still, the DBMS can use the same index as before. This time, it just traverses it backwards!

5.1.2. Expression based keyset pagination

This is just like positional keyset pagination but instead of relying on the order of keyset elements and ORDER BY expressions,

this makes use of the KeysetBuilder which matches by the expression.

Cat firstCat = nextCats.get(0);

List<Cat> previousCats = cb.beforeKeyset()

.with("cat.birthday", firstCat.getBirthday())

.with("cat.id", firstCat.getId())

.end()

.getResultList();

// cats and previousCats are equal

This results in the same JPQL as seen above. It’s a matter of taste which style to choose.

5.1.3. Keyset page based keyset pagination

When using the transparent keyset pagination support through the PaginatedQueryBuilder API

with keyset extraction it is possible to get access to an extracted

KeysetPage and thus also to the highest

and lowest keysets.

These keysets can also be used for paging/scrolling although when already having access to a KeysetPage it might be better to use the

PaginatedQueryBuilder API instead.

6. Group by and having clause

The GROUP BY and HAVING clause are closely. Logically the HAVING clause is evaluated after the GROUP BY clause.

A HAVING clause does not make sense without a GROUP BY clause.

6.1. Group by

When a GROUP BY clause is used, most DBMS require that every non-aggregate expression that appears in the following clauses must also appear in the GROUP BY clause

-

SELECT -

ORDER BY -

HAVING

This is due to the fact that these clauses are logically executed after the GROUP BY clause.

Some DBMS even go as far as not allowing expressions of a certain complexity in the GROUP BY clause. For such expressions,

the property/column references have to be extracted and put into the GROUP BY clause instead, so that the composite expressions can be built after grouping.

By default, the use of complex expressions is allowed in groupBy(),

but can be disabled by turning on the compatible mode.

OpenJPA only supports path expressions and simple function expression in the GROUP BY clause

|

Currently it is not possible to have a HAVING clause when using the PaginatedCriteriaBuilder API or count query generation. Also see #616

|

6.1.1. Implicit group by generation

Fortunately all these issues with different DBMS and the GROUP BY clause is handled by Blaze Persistence through implicit group by generation.

Implicit group by generation adds just the expressions that are necessary for a query to work on a DBMS without changing it’s semantics.

The generation will kick in as soon as

-

The

GROUP BYclause is used -

An aggregate function is used

If you don’t like the group by generation or you run into a bug, you can always disable it on a per-query and per-clause basis if you like.

Let’s look at an example

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class)

.select("age")

.select("COUNT(*)");

This will result in the following JPQL query

SELECT cat.age, COUNT(*) FROM Cat cat GROUP BY cat.age

The grouping is done based on the non-aggregate expressions, in this case, it is just the age of the cat.

If you disabled the implicit group by generation for the SELECT clause, the GROUP BY clause would be missing and you’d have to add it manually like

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class)

.select("age")

.select("COUNT(*)")

.groupBy("age");

which isn’t too painful at first, but can get quite cumbersome when having many expressions.

Not using implicit group by generation for the HAVING clause when using non-trivial expression like e.g. age + 1 might lead to problems on some DBMS. MySQL for example can only handle column references in the GROUP BY and doesn’t match complex expressions for the HAVING clause.

|

Subqueries are generally not allowed in the GROUP BY clause, thus correlated properties/columns have to be extracted. Implicit group by generation also takes care of that.

|

Due to the fact that subqueries are not allowed, the SIZE() function can’t be used in this clause.

|

6.1.2. Group by Entity

Although the JPA spec mandates that a JPA provider must support grouping by an entity, it is apparently not asserted by the JPA TCK.

Some implementations don’t support this feature which is why Blaze Persistence expands an entity in the GROUP BY clause automatically for you.

This also works when relying on implicit group by generation i.e.

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class, "c")

.leftJoin("c.kittens", "kitty")

.select("c")

.select("COUNT(*)");

will result in the following logical JPQL query

SELECT c, COUNT(*) FROM Cat c LEFT JOIN c.kittens kitty GROUP BY c

but will expand c to all singular attributes of it’s type.

| Hibernate still lacks support for this feature which is one of the reasons for doing the expansion within Blaze Persistence |

6.2. Having clause

The HAVING clause is similar to the WHERE clause and most of the inner workings are described in the Predicate Builder chapter.

The only difference is that the HAVING clause in contrast to the WHERE clause can contain aggregate functions and is logically executed after the GROUP BY clause.

The API for using the HAVING clause is the same as for the WHERE clause, except that it uses having instead of the where prefix.

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class)

.select("age")

.select("COUNT(*)")

.groupBy("age")

.having("COUNT(*)").gt(2);

SELECT cat.age, COUNT(*) FROM Cat cat GROUP BY cat.age HAVING COUNT(*) > :param_1

7. Order by clause

An ORDER BY clause can be used to order the underlying result list.

Depending on the mapping and the collection type in an entity, the order of elements contained in collection may or may not be preserved.

Contrary to what the JPA spec allows, Blaze Persistence also allows to use the ORDER BY clause in subqueries.

|

By default, the use of complex expressions is allowed in orderBy(),

but can be disabled by turning on the compatible mode.

Also note that by default, Blaze Persistence chose to use the NULLS LAST behavior instead of relying on the DBMS default, in order to provide better portability.

It is strongly advised to always define the null precedence in order to get deterministic results.

For convenience Blaze Persistence also offers you shorthand methods for ordering ascending or descending that make use of the default null precedence.

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class)

.select("age")

.select("id")

.orderByAsc("age")

.orderByDesc("id");

SELECT cat.age, cat.id

FROM Cat cat

ORDER BY

cat.age ASC NULLS LAST,

cat.id DESC NULLS LAST

Apart from specifying the expression itself for an ORDER BY element, you can also refer to a select alias.

This is also the only way to order by the result of a subquery. Many DBMS do not support the occurrence of a subquery in ORDER BY directly, so Blaze Persistence dos not allow to do that either.

|

CriteriaBuilder<Tuple> cb = cbf.create(em, Tuple.class)

.from(Cat.class)

.selectSubquery("olderCatCount")

.from(Cat.class, "subCat")